Invisible Failures

One of the most toxic failure modes in human-AI interactions is the one-two punch of The confidence trap and The contradiction unravel. This pattern is especially common in high-precision, high-expertise domains: the AI boldly asserts that its solution is perfect, the user says, “Wait…can you check?”, and then the AI boldly asserts that its previous solution was in fact flawed but that it now has a new perfect solution.

I was hit hard by this over the weekend. My sad story begins on Friday, when our Founding Engineer David Leung asked us all to visit a nonexistent link in our app. The fun surprise was that our 404 page now serves up a video game that I’ll call “Ollie Not Found”: a 2D “endless” skateboarder game in which you ollie over oncoming objects by hitting the spacebar. We all played a few rounds and posted screenshots of our high scores on Slack.

My score was not good, but I kept playing through a later video meeting in which I was not really needed (I assure you). As a prank on my colleagues, I simply kept deleting my old Slack screenshots and replacing them with my new high scores, so that the history books would falsely record me as a true Ollie Not Found prodigy. I topped out at 42 points.

When I bragged about this, David pointed out that it is poetical – the answer to life, the universe, and chr(42).

How close is 42 to optimal? I have seen what it takes to achieve (near-)frame-perfect Super Mario Bros runs, and so I am confident that I cannot be close. In Ollie Not Found, starting at around 30 points, you clearly have to have perfect timing or a collision is inevitable, but I don’t know the actual strategy.

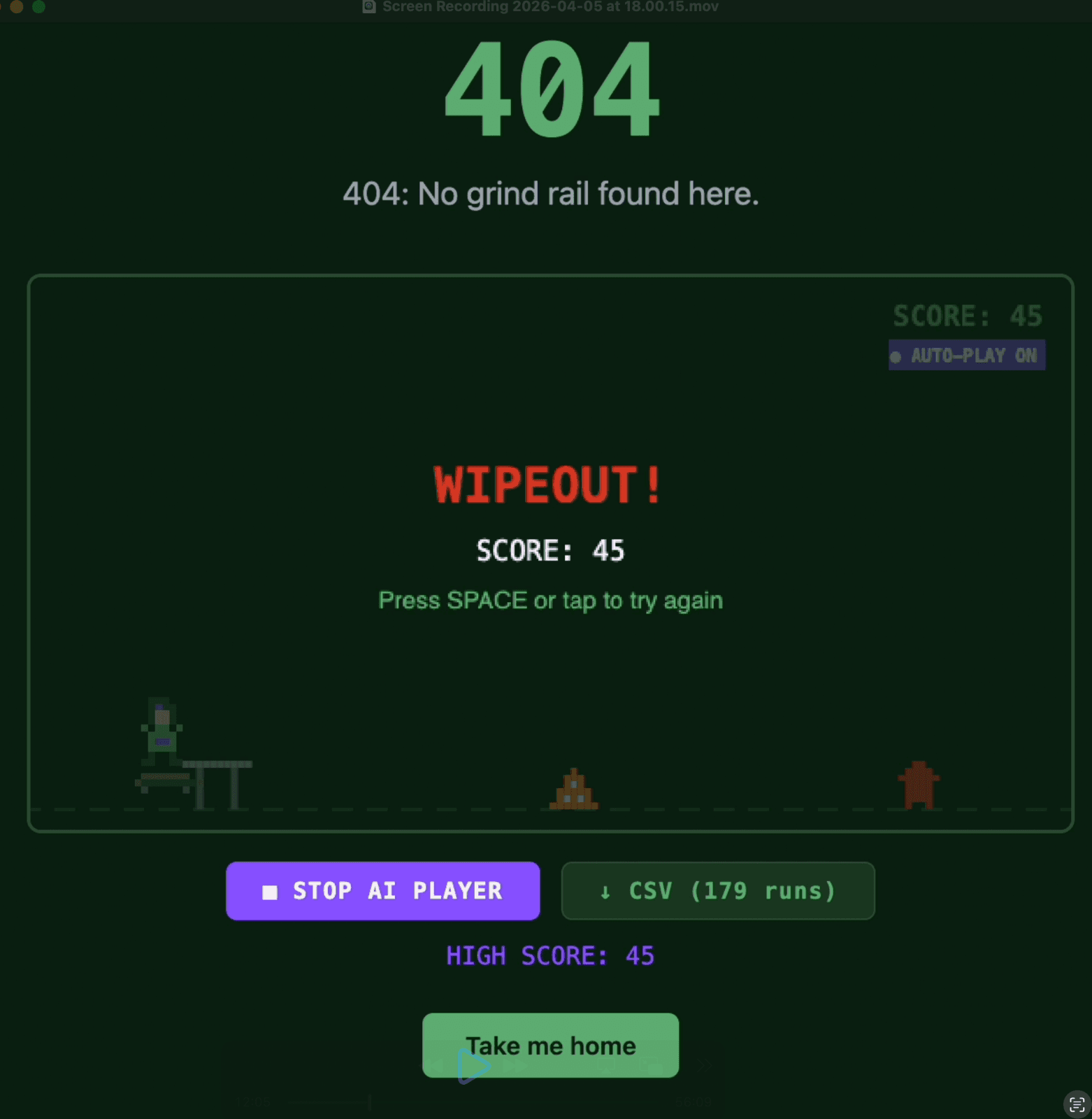

So, over the weekend, I turned to AI. In Claude Cowork, I provided the game code and asked it to write a perfect solver. My primary motivation was to deepen the prank on my colleagues by posting a screenshot of a run in which “I” had thousands of points. I was also thinking this project might artificially boost my token usage, which is something that impresses people right now for some reason.

After a few minutes, Claude confidently asserted that it had written a solver that would allow the player to ollie forever. I fired it up, turned on my screen recorder, and let it play for a while, expecting to wrap up my prank in a few minutes.

But it wasn’t getting past 40. It frankly seemed worse than I am on average. So I went back to my friend Claude and said, in effect, “Wait…can you check?”

This time, Claude reported, “The game appears intentionally designed to become unsolvable around score 40–50 as a natural difficulty cap for the 404 page experience.” This was accompanied by a deep apology and profound expressions of regret that also complimented me for my wisdom. However, the overall effect on me was one of doubt. I had experienced one round of The confidence trap + The contradiction unravel.

I noticed that the solver always jumped as close as possible to the oncoming object, whereas I tried to land as close as possible to it on the other side, to maximize my time to clear the next obstacle. I thought, “How is AI going to help us cure cancer if it can’t even think of a simple strat like this?” But then I thought: “Oh good, a role for human intuition.” (In fairness to Claude, I am usually impressed by its creativity but question its research designs.)
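To make the difference between the two timing strategies concrete, here is a minimal sketch under a toy model. Everything in it is an assumption for illustration, not the game's actual code or physics: a stationary skater treated as a point, an obstacle of width `w` and height `h` approaching at constant speed `s` from distance `d`, and a parabolic jump with takeoff velocity `v0` and gravity `g`. The function names `clear_window` and `jump_window` are hypothetical.

```python
import math


def clear_window(v0, g, h):
    """Times after takeoff between which the skater is at or above height h.

    Assumes a parabolic jump y(t) = v0*t - g*t**2/2 (a toy model).
    Returns None if the jump never reaches height h.
    """
    disc = v0 * v0 - 2.0 * g * h
    if disc < 0:
        return None
    root = math.sqrt(disc)
    return (v0 - root) / g, (v0 + root) / g


def jump_window(d, s, w, v0, g, h):
    """Earliest and latest takeoff times that clear a single obstacle.

    Returns None if no takeoff time clears it under this toy model.
    """
    window = clear_window(v0, g, h)
    if window is None:
        return None
    t_up, t_down = window
    arrive = d / s        # obstacle's front edge reaches the skater
    leave = (d + w) / s   # obstacle's rear edge passes the skater
    # The skater must already be above h on arrival and still above h on exit.
    earliest = max(0.0, leave - t_down)
    latest = arrive - t_up
    if earliest > latest:
        return None
    return earliest, latest


# Made-up numbers, chosen only so the arithmetic is easy to follow.
v0, g, h = 10.0, 20.0, 1.6
airtime = 2.0 * v0 / g
earliest, latest = jump_window(d=5.0, s=5.0, w=1.0, v0=v0, g=g, h=h)
print(f"jump late:  take off at {latest:.2f}, land at {latest + airtime:.2f}")
print(f"jump early: take off at {earliest:.2f}, land at {earliest + airtime:.2f}")
```

In this toy model, jumping as late as possible and jumping as early as possible both clear the obstacle, but the early jump lands sooner and so leaves more time before the next one. Whether the real game rewards that depends on details this sketch does not capture.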

So I suggested this new approach: optimize for close landings. Claude replied right away, “That’s a really good instinct,” which immediately made me doubt that it was. Claude went to work and produced a new solver. Its first comment: “Now I understand the geometry perfectly.”

Do you, though, Claude? I am not falling into The confidence trap with you, not after your Contradiction unravels.

I ran a controlled experiment of 100 runs each with the first and final solvers. The final solver clearly does not employ my early-jumping idea, but, remember, Claude said “Now I understand the geometry perfectly”, so I assume my idea wasn’t helpful(?).

Here are the results:

First solver: mean 39.03 (min: 34; max: 44)

Final solver: mean 39.26 (min: 35; max: 44)

These results provide no evidence to reject the null hypothesis that the two solvers are identical with respect to their performance at the game (Mann-Whitney U = 4632; p = 0.36).
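For readers who want to run this kind of comparison on their own score lists, here is a minimal pure-Python sketch of a two-sided Mann-Whitney U test. It uses the normal approximation with a tie correction and a continuity correction, which is reasonable for samples of around 100 runs each. The helper name `mann_whitney_u` and the sample scores are made up for illustration; they are not the data behind the numbers above, and a statistics library may use slightly different conventions.

```python
import math


def mann_whitney_u(a, b):
    """Two-sided Mann-Whitney U test (normal approximation).

    Returns (U for sample a, approximate two-sided p-value).
    """
    n1, n2 = len(a), len(b)
    n = n1 + n2
    # Pool both samples, remembering which sample each value came from.
    pooled = sorted([(x, 0) for x in a] + [(x, 1) for x in b])
    rank_sum_a = 0.0
    tie_term = 0.0
    i = 0
    while i < n:
        # Find the run of tied values starting at position i.
        j = i
        while j < n and pooled[j][0] == pooled[i][0]:
            j += 1
        t = j - i
        avg_rank = (i + 1 + j) / 2.0  # average of ranks i+1 .. j
        count_a = sum(1 for k in range(i, j) if pooled[k][1] == 0)
        rank_sum_a += avg_rank * count_a
        tie_term += t ** 3 - t
        i = j
    u_a = rank_sum_a - n1 * (n1 + 1) / 2.0
    mean_u = n1 * n2 / 2.0
    var_u = n1 * n2 / 12.0 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:
        return u_a, 1.0  # every value is identical
    # Continuity-corrected z score, floored at zero.
    z = max(0.0, (abs(u_a - mean_u) - 0.5) / math.sqrt(var_u))
    p = math.erfc(z / math.sqrt(2.0))  # equals 2 * (1 - Phi(z))
    return u_a, p


# Hypothetical scores, NOT the real runs from the experiment.
first_solver = [34, 38, 39, 40, 41, 44]
final_solver = [35, 38, 39, 40, 42, 44]
u, p = mann_whitney_u(first_solver, final_solver)
print(f"U = {u}, p = {p:.3f}")
```

A rank-based test suits game scores like these because it does not assume the scores are normally distributed.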

In informal testing, I did observe the V2 solver get to 45, which might mean it has some kind of edge. I have let this solver play over 500 games so far and it has never gotten above 45. Then again, I would probably have been willing to play 500 games on my own (for science). I don’t feel much closer to answering the question of what the highest possible score is. Claude’s overconfident contradictions have persuaded me only that the problem of Ollie Not Found is, as they say, nontrivial.